Programmes using the DCED Standard for Results Measurement all face this challenge, and two shared their experiences in a webinar. This web page summarises some of the suggestions from the webinar – many more are available in the recording:

Recommendation 1: Do less results measurement

We often see programmes which collect more data than they need.

Focus measurement on successful areas

If the intervention doesn’t work, we don’t need to measure its impact! Some interventions never gain traction – perhaps the partner isn’t interested, or consumers aren’t buying, or suppliers don’t participate. In this case, a good monitoring system should catch the problem early on, saving you the expense of a large survey.

Other interventions work, but only with a small number of beneficiaries. You may conclude that there is limited potential for scale, and a rigorous survey might be unnecessary. Again, a good monitoring system should give you the data you need to assess the value of an expensive evaluation.

A 2016 review found that “within a typical programme, only a handful of interventions will ever reach scale.” You can save a lot of money on results measurement by concentrating resources on a small number of successful interventions.

Reduce information needs

When programmes monitor interventions better, they know more and there are fewer gaps to fill through expensive research. Programmes often separate results measurement from programme implementation, and as a result miss opportunities to learn. For example, the team managing an intervention is out in the field and collects a lot of information. If the RM team sits down with them and analyses the information they already have, together they can identify where the research gaps are. They may even conclude that there is already enough data from the partner and no need for a separate visit.

Another common pitfall is spending a lot of time defining many indicators, all of which then need to be assessed. Defining only the essential indicators can save a programme time; for example:

| Change | Essential indicators | Not essential indicators |


Reduce spending on baselines and control groups

Programmes often want to know when they should do baselines, how big the sample should be, and whether they should be outsourced.


Generic baselines that aim to interview a representative sample of all enterprises in a sector before the intervention begins can be a waste of money, for two reasons. First, you don’t yet know exactly what activities will be conducted, so you do not know exactly what to measure. Second, in private sector development programmes, beneficiaries are self-selecting: you won’t know who is participating in the intervention until some way into implementation. For example, there is no way to know who will purchase from an input company until they have actually done so.

On the other hand, if a large baseline has already been done or secondary data are available, it can be useful to analyse the data you have to see whether part of it can serve as a relevant baseline (e.g. by isolating the sample from a particular location if that is where your beneficiaries are).

Instead of doing one large baseline, it is often more useful to conduct smaller, targeted baselines that can be done in-house. It is also often possible to collect retrospective baseline data towards the end of an intervention (with a certain amount of caution). This is particularly useful for interventions with short time-frames, such as those working with rapid crop seasons or in urban environments. Alternatively, if you have a robust control group, you could skip the baseline and simply survey your control and treatment groups at a single point in time.
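As a rough illustration of the single-point-in-time option, the sketch below compares a treatment group and a control group surveyed once. It is not a DCED method. The function name, the income figures and the use of a simple normal approximation for the confidence interval are all assumptions made for illustration, and the comparison is only meaningful if the two groups really are comparable.

```python
import math
from statistics import mean, stdev


def difference_in_means(treatment, control):
    """Return the difference in group means and an approximate 95% interval.

    Assumes both groups were surveyed at one point in time and are comparable.
    The 1.96 multiplier is a normal approximation; it is rough for small samples.
    """
    diff = mean(treatment) - mean(control)
    standard_error = math.sqrt(
        stdev(treatment) ** 2 / len(treatment)
        + stdev(control) ** 2 / len(control)
    )
    return diff, (diff - 1.96 * standard_error, diff + 1.96 * standard_error)


# Hypothetical seasonal incomes from a small in-house survey.
treatment_incomes = [120, 135, 150, 110, 140]
control_incomes = [100, 105, 95, 110, 90]

diff, (low, high) = difference_in_means(treatment_incomes, control_incomes)
print(f"Estimated difference: {diff} (approx. 95% interval {low:.1f} to {high:.1f})")
```

If the interval is wide or includes zero, the survey alone does not tell you much, and qualitative evidence on why change did or did not happen becomes more important.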

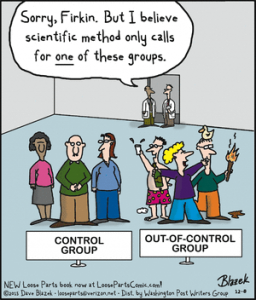

Using control groups is also expensive, as they typically double the data collection costs, and need specialist expertise to ensure comparability with the treatment groups. They are not always necessary. The DCED provides a tool to help select methodologies for assessing attribution. Of the four methodologies included, only two require control groups. Qualitative methodologies for assessing attribution can be very effective and require far fewer resources.

Recommendation 2: Do better results measurement

This section describes two ways in which you can improve the quality of your measurement: investing in your team, and using better research and data collection methods.

Investing in your team

On the left is Gareth Bale, who used to play for Tottenham Hotspur. Tottenham bought him for a fee of seven million pounds and sold him six years later for about fifteen times that price. You might not have seven million pounds to spend on your results measurement team, but Gareth Bale illustrates that, if you hire the right staff and invest in them, you could save considerable amounts of money.


Firstly, train the whole team (not just a dedicated MRM unit) in monitoring and results measurement. The intervention team is a valuable source of monitoring data. They meet with partners, visit field sites, and complete reports. If the intervention team know what monitoring they should be doing, then the specialist MRM team does not need to duplicate these efforts. Instead, it can focus on quality-assuring the measurement and conducting bigger, more complex assessments.

Secondly, build in-house capacity to do research. Conducting large-scale research is often one of the biggest costs of an MRM unit. External research firms are expensive and difficult to manage. Instead, build the capacity to manage research internally. This requires training the MRM team in survey planning and analysis, and finding trusted enumerators who can collect the data. As well as saving money, this has the additional benefit that the knowledge from data analysis will be kept in-house, rather than disappear once the contract with the research firm finishes.

Programmes also often spend a lot of money hiring a succession of consultants over the life of a programme to help with different aspects of results measurement. It is much more cost-efficient in the long run to have the same person involved throughout. That expert can get to know the programme at the start and help train people in-house, and can later continue providing support, sometimes in person and sometimes remotely. This avoids both the cost and the effort of explaining the context to a new person each time.

Improving research and data collection methods

There are three ways in which you can improve your research methods and save money in data collection.

The first is to use mixed methods, collecting both qualitative and quantitative data. Different types of data have different strengths and limitations. Quantitative data, for example, are very useful when you want to understand scale, such as what percentage of farmers buy a new type of seed. Qualitative methods are better at explaining results, for example by showing why farmers do (or do not) buy seed. Using them together overcomes the limitations of either method alone and can increase the reliability of your research. Understanding why change happens through qualitative research can also help answer questions of attribution, reducing the need for a control group.

Secondly, good sampling techniques can reduce sample sizes while still providing sufficient confidence in your data to make an informed decision. If you can estimate the likely size of your impact, for example, you might be able to reduce the sample size while still being able to answer questions about intervention impact. If you can reduce the number of sub-groups you want to analyse, you can also dramatically reduce your sample size. You can find out more in the DCED’s guidance on sample sizes.
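To make the link between expected impact, sub-groups and sample size concrete, here is a minimal sketch using the standard textbook approximation for comparing the means of two groups. It is not taken from the DCED guidance. The function names, the default 5% significance level and 80% power, and the example figures are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_group(effect, sd, alpha=0.05, power=0.80):
    """Approximate respondents needed per group to detect a difference in means.

    effect: the smallest difference you want to detect (e.g. extra income).
    sd:     the expected standard deviation of the outcome.
    Uses the normal approximation n = 2 * ((z_alpha + z_beta) * sd / effect)^2.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_beta) * sd / effect) ** 2)


def total_sample_size(effect, sd, subgroups=1, groups=2):
    """Total respondents, assuming every sub-group is analysed separately
    at the same precision (an illustrative simplification)."""
    return sample_size_per_group(effect, sd) * groups * subgroups


# Hypothetical outcome with a standard deviation of 100 units.
for expected_effect in (20, 40):
    print(expected_effect, sample_size_per_group(expected_effect, sd=100))

# Analysing three sub-groups separately triples the total survey size.
print(total_sample_size(20, sd=100, subgroups=1))
print(total_sample_size(20, sd=100, subgroups=3))
```

Under these assumptions the required sample falls with the square of the expected effect, so doubling the expected impact cuts the sample to roughly a quarter, while each additional sub-group analysed separately adds a full sample of its own.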

Finally, using mobile phones to collect data makes it easier to maintain quality when using enumerators and saves a lot of time and money in data entry and analysis. Kobo Collect is a free tool, downloadable on any Android phone, which makes it relatively simple to set up and analyse survey data.

Conclusion

There’s no such thing as a free lunch. Good MRM will always cost money. Using the above techniques, however, can reduce the amount of expensive survey work, and increase the quality and efficiency of your data collection.

These techniques will also help if you are planning an audit of your MRM system. The DCED Auditors take a pragmatic approach to MRM, focusing on how well it is done rather than how much is spent. While we can’t guarantee that the advice above will help you pass an audit, the auditors will certainly look for practical and effective results measurement systems.

If you think we missed out anything important, please send us an email!